I am Zehadul Islam


I am a Computer Science and Engineering undergraduate at Green University of Bangladesh with a strong focus on Artificial Intelligence, Machine Learning, and data-driven system design, dedicated to transforming theoretical concepts into practical, real-world AI solutions.

I have hands-on experience working on machine learning-based academic projects, including data preprocessing, supervised model training, evaluation, and explainable AI (SHAP), alongside developing secure, role-based full-stack web applications with REST API integration. I've built 5+ ML-based projects focusing on predictive analytics and model explainability.

I actively practice competitive programming and have solved 400+ algorithmic problems on platforms like Codeforces, AtCoder, and CodeChef, which has strengthened my algorithmic thinking and problem-solving ability. I am seeking internship or entry-level opportunities to grow as an AI/ML-focused software engineer and researcher.

B.Sc. Computer Science & Engineering

Green University of Bangladesh

2022 - Present

Higher Secondary Certificate (HSC)

Hajigonj Model Govt. College

2018 - 2020

Technologies I Work With

Core: Python, C++ | Intermediate: Java, JavaScript | Familiar: PHP

Python

JavaScript

Java

C/C++

PHP

Advanced: Python ML Stack | Intermediate: TensorFlow, SHAP (XAI)

TensorFlow

Scikit-learn

Pandas

SHAP (XAI)

HTML/CSS

Bootstrap

MySQL

Daily Use: Git/GitHub, VS Code, Linux | Advanced: Docker, Jupyter, Postman

VS Code

Docker

Jupyter

Linux

Postman

Codeforces

AtCoder

CodeChef

Git

LaTeX

MS Office

Lucidchart

Canva

A showcase of my technical projects and innovative solutions

Advanced Python data analysis tool enabling researchers to parse, explore, and visualize ARFF datasets with intelligent decision tree generation, comprehensive attribute analysis, dynamic filtering capabilities, and statistical summaries for machine learning research and academic data science workflows.

Healthcare crowdfunding platform enabling secure communication between patients seeking medical assistance, donors contributing funds, and administrators managing campaigns with granular role-based access control, real-time donation tracking, and transparent fund allocation for medical emergencies.

My professional journey

Apr 2023 - Feb 2024

Oct 2021 - Jun 2022

My academic background

2022 - Present

Green University of Bangladesh

Focus: AI/ML, Web Development, Software Engineering

2018 - 2020

Hajigonj Model Govt. College

Bondstein Technologies & ICT Division (Bangladesh)

Comprehensive training in Artificial Intelligence, Machine Learning, and IoT technologies

Tools, frameworks, and technologies I work with

Ongoing research and development

Conducting comprehensive research on applying machine learning models to neonatal clinical data to predict adverse drug effects. Integrating SHAP-based explainable AI to ensure model transparency and clinical relevance.

Sharing knowledge and insights on AI, ML, and Software Development

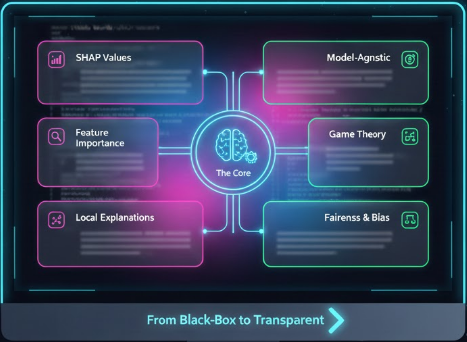

Exploring SHapley Additive exPlanations (SHAP) for interpreting complex machine learning models in healthcare applications. Learn how SHAP values help explain feature importance and model decisions in clinical settings.


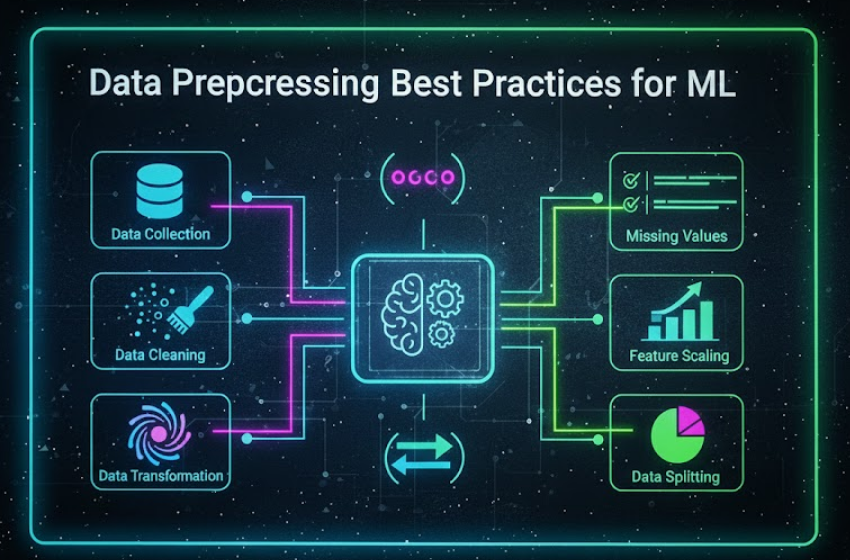

A comprehensive guide to data cleaning, feature engineering, and preprocessing techniques using Pandas and Scikit-learn. From handling missing values to feature scaling and encoding categorical variables.


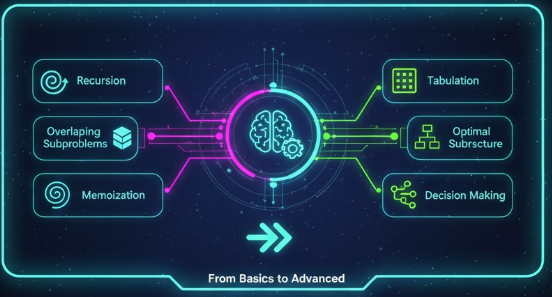

Master dynamic programming concepts with real-world examples from competitive programming. Covering memoization, tabulation, and optimization techniques to solve complex algorithmic challenges efficiently.

Get a complete overview of my qualifications, professional experience, academic background, and achievements in AI/ML and software development.

Ready to bring your ideas to life? Let's discuss how we can work together to create something extraordinary.

I typically respond within 24 hours, sometimes much faster.

Fill out the form below and I'll get back to you soon.