Data preprocessing is often said to consume 80% of a data scientist's time, yet it's the foundation of any successful machine learning project. Poor data quality leads to poor model performance.

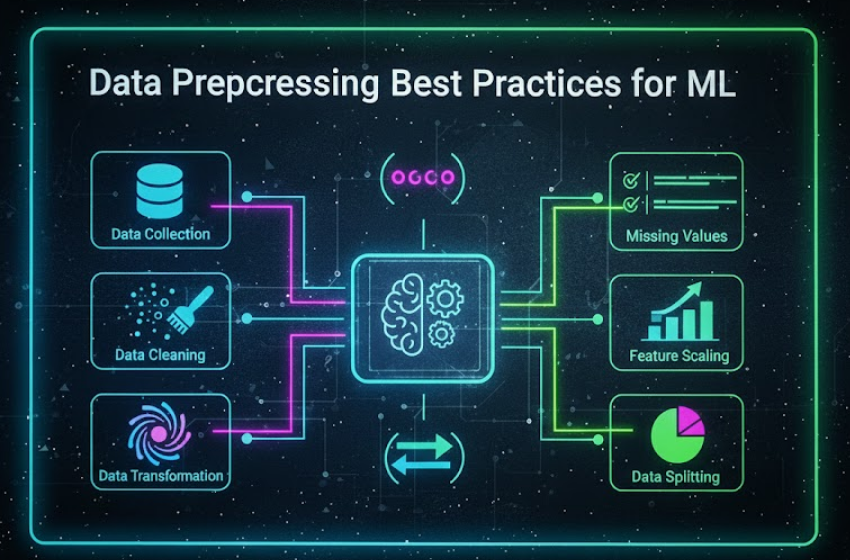

The Complete Data Preprocessing Pipeline

- Data Collection & Understanding

- Data Cleaning

- Feature Engineering

- Feature Scaling

- Feature Selection
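As a rough illustration of how these stages can fit together, the sketch below chains cleaning, encoding, scaling, and selection with scikit-learn's `Pipeline` and `ColumnTransformer`. The toy DataFrame, its column names, and the choice of `SelectKBest` with `k=3` are assumptions made for the example, not part of the original walkthrough.

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Hypothetical toy data; the column names are illustrative only.
df = pd.DataFrame({
    "age": [25, 32, np.nan, 41, 38, 29],
    "price": [10.0, 12.5, 11.0, np.nan, 13.0, 9.5],
    "category": ["a", "b", "a", "c", "b", "a"],
    "target": [0, 1, 0, 1, 1, 0],
})
X = df.drop(columns="target")
y = df["target"]

# Cleaning + scaling for numeric columns
numeric_steps = Pipeline([
    ("impute", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),
])

# Cleaning + encoding for categorical columns
categorical_steps = Pipeline([
    ("impute", SimpleImputer(strategy="most_frequent")),
    ("encode", OneHotEncoder(handle_unknown="ignore")),
])

preprocess = ColumnTransformer([
    ("num", numeric_steps, ["age", "price"]),
    ("cat", categorical_steps, ["category"]),
])

# Feature selection runs last, on the fully preprocessed matrix
pipeline = Pipeline([
    ("preprocess", preprocess),
    ("select", SelectKBest(score_func=f_classif, k=3)),
])

X_ready = pipeline.fit_transform(X, y)
print(X_ready.shape)
```

Bundling the steps into one object means every stage is fitted together and can later be reapplied to new data with a single `transform` call.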

1. Handling Missing Values

import pandas as pd

import numpy as np

# Count missing values per column (assumes a DataFrame named df is already loaded)

print(df.isnull().sum())

# Drop rows with missing values

df_clean = df.dropna()

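# Fill with the median (mean or mode work the same way); assign back instead of using inplace on a column

df['age'] = df['age'].fillna(df['age'].median())

# Forward fill for time series (fillna(method='ffill') is deprecated in recent pandas)

df['price'] = df['price'].ffill()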
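The simple fills above compute their statistic over the whole DataFrame. When the data will later be split into training and test sets, one option is to learn the fill value from the training rows only. The sketch below does this with scikit-learn's `SimpleImputer`; the toy `age` values and the unshuffled 75/25 split are assumptions made for illustration.

```python
import numpy as np
import pandas as pd
from sklearn.impute import SimpleImputer
from sklearn.model_selection import train_test_split

# Hypothetical data: 'age' has a gap in both the training and the test rows.
df = pd.DataFrame({"age": [20.0, np.nan, 30.0, 40.0, 50.0, 60.0, np.nan, 70.0]})

# shuffle=False keeps row order, so the last 2 rows form the test set
train, test = train_test_split(df, test_size=0.25, shuffle=False)

imputer = SimpleImputer(strategy="median")
train_filled = imputer.fit_transform(train[["age"]])  # learns the median from train only
test_filled = imputer.transform(test[["age"]])        # reuses the training median

print("median learned from train:", imputer.statistics_[0])
print("median of the full column:", df["age"].median())
```

The two printed medians differ, which shows how a statistic computed on the full column would carry information from the test rows into training.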

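# Advanced: iterative imputation (models each feature from the others; expects numeric columns only and returns a single imputed dataset)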

from sklearn.experimental import enable_iterative_imputer

from sklearn.impute import IterativeImputer

imputer = IterativeImputer(random_state=0)

df_imputed = pd.DataFrame(imputer.fit_transform(df), columns=df.columns)

2. Handling Outliers

# IQR Method

Q1 = df['price'].quantile(0.25)

Q3 = df['price'].quantile(0.75)

IQR = Q3 - Q1

lower_bound = Q1 - 1.5 * IQR

upper_bound = Q3 + 1.5 * IQR

df_clean = df[(df['price'] >= lower_bound) & (df['price'] <= upper_bound)]
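Dropping rows is not the only way to use the IQR bounds. As a sketch, the same bounds can cap extreme values instead, which keeps every row. The helper name `iqr_bounds` and the sample prices are hypothetical, introduced only for this example.

```python
import pandas as pd


def iqr_bounds(series: pd.Series, k: float = 1.5) -> tuple[float, float]:
    """Return (lower, upper) bounds at k * IQR beyond the quartiles."""
    q1 = series.quantile(0.25)
    q3 = series.quantile(0.75)
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


# Hypothetical prices with one obvious outlier
prices = pd.Series([10.0, 11.0, 12.0, 13.0, 14.0, 15.0, 16.0, 500.0], name="price")

lower, upper = iqr_bounds(prices)

# Cap values at the bounds instead of removing the rows
capped = prices.clip(lower=lower, upper=upper)

print(lower, upper)
print(capped.tolist())
```

Capping is often preferable when rows are scarce or when the other columns of an outlying row still carry useful signal. Filtering, as in the snippet above, is simpler when the outliers are clearly data errors.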

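# Z-Score Method (an alternative to IQR; assumes 'price' has no missing values, since a single NaN makes every z-score NaN)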

from scipy import stats

z_scores = np.abs(stats.zscore(df['price']))

df_clean = df[z_scores < 3]

3. Feature Encoding

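# Label Encoding (integer codes imply an order; scikit-learn intends LabelEncoder for target labels, and OrdinalEncoder is the equivalent for features)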

from sklearn.preprocessing import LabelEncoder

le = LabelEncoder()

df['category_encoded'] = le.fit_transform(df['category'])

# One-Hot Encoding

df_encoded = pd.get_dummies(df, columns=['category'])

# Target Encoding (for high cardinality); compute the means on training data only, otherwise the target leaks into the feature

category_means = df.groupby('category')['target'].mean()

df['category_encoded'] = df['category'].map(category_means)

4. Feature Scaling

from sklearn.preprocessing import StandardScaler, MinMaxScaler, RobustScaler

# Standardization (mean=0, std=1); X is assumed to be a numeric training feature matrix

scaler = StandardScaler()

X_scaled = scaler.fit_transform(X)

# Normalization (range 0-1)

scaler = MinMaxScaler()

X_normalized = scaler.fit_transform(X)

# Robust Scaling (uses median, robust to outliers)

scaler = RobustScaler()

X_robust = scaler.fit_transform(X)

Common Pitfalls to Avoid

- Data Leakage: Never use test data information during preprocessing. Fit imputers, encoders, and scalers on the training set only, then apply them to the test set

- Overfitting: Don't create too many features without validation

- Ignoring Class Imbalance: Use SMOTE, undersampling, or class weights, and resample only the training data

- Not Saving Preprocessors: Always save scalers and encoders for production
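Several of these pitfalls can be addressed together. The sketch below splits before fitting anything, fits the scaler inside a `Pipeline` on the training split only, uses class weights for the imbalance, and saves the fitted pipeline with `joblib`. The synthetic data, the `LogisticRegression` model, and the file name are assumptions for illustration. SMOTE is not shown because it comes from the separate imbalanced-learn package.

```python
import os
import tempfile

import joblib
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic, imbalanced data (10% positives); purely illustrative.
rng = np.random.default_rng(0)
y = np.array([1] * 20 + [0] * 180)
X = rng.normal(size=(200, 3))
X[y == 1] += 1.5  # shift the minority class so it is learnable

# Split first, so nothing fitted below ever sees the test rows
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

model = Pipeline([
    ("scale", StandardScaler()),                            # fitted on X_train only
    ("clf", LogisticRegression(class_weight="balanced")),   # reweights the rare class
])
model.fit(X_train, y_train)

# Save the fitted scaler and model together for production use
path = os.path.join(tempfile.mkdtemp(), "preprocessing_pipeline.joblib")
joblib.dump(model, path)

restored = joblib.load(path)
same = np.array_equal(model.predict(X_test), restored.predict(X_test))
print("restored pipeline reproduces predictions:", same)
```

Saving the whole pipeline rather than the model alone keeps the scaler's training statistics attached to it, so production inputs are transformed the same way as the training data.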